mall uses Large Language Models (LLM) to run Natural Language Processing (NLP) operations against your data. This package is available for both R and Python. Version 0.2.0 has been released to CRAN and PyPI respectively.

In R, you can install the latest version with:

In Python, with:

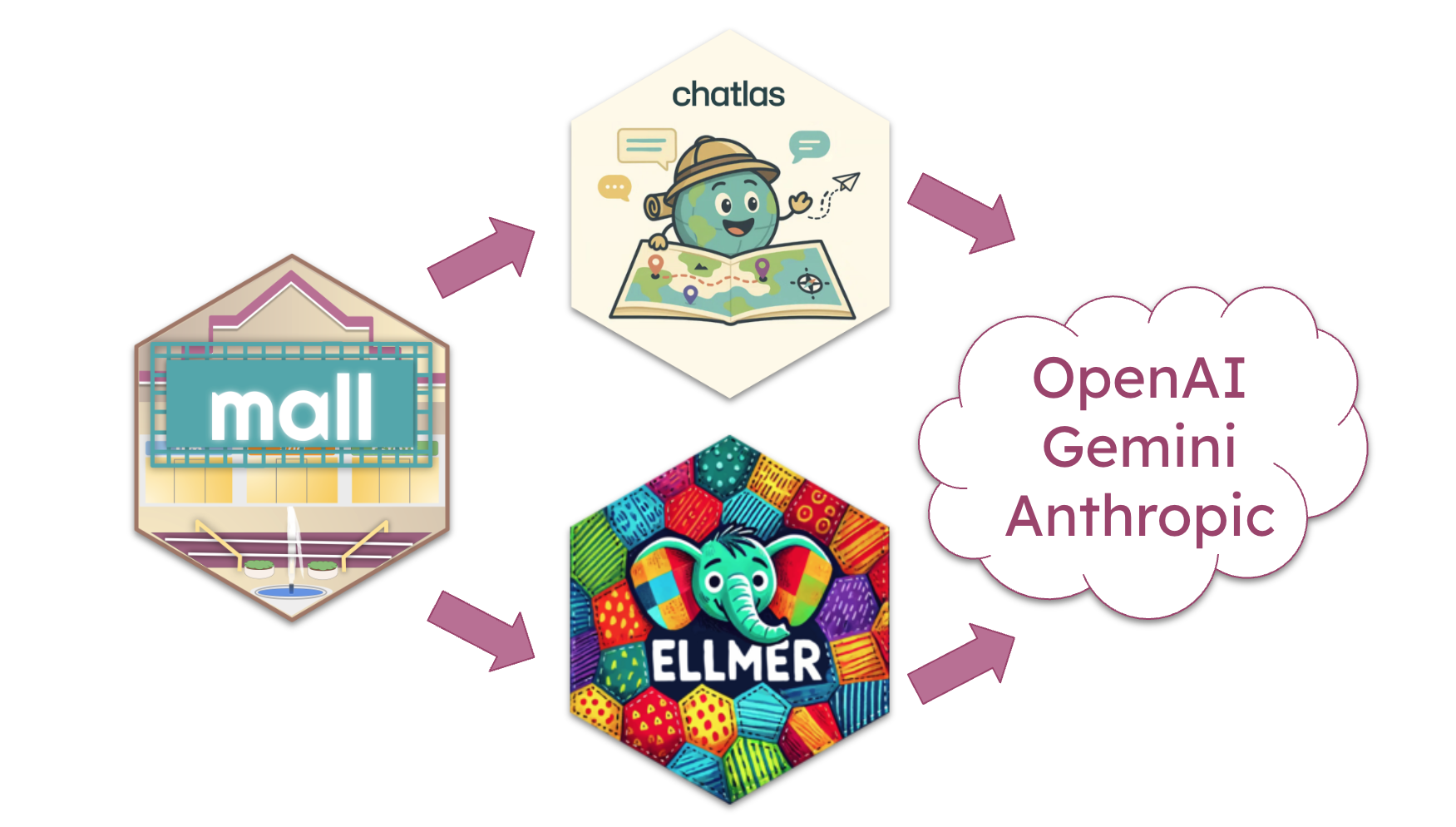

This release expands the number of LLM providers you can use with mall. Also, in Python it introduces the option to run the NLP operations over string vectors, and in R, it enables support for 'parallelized' requests.

It is also very exciting to announce a brand new cheatsheet for this package. It is available in print (PDF) and HTML format!

More LLM providers

The biggest highlight of this release is the ability to use external LLM providers such as OpenAI, Gemini and Anthropic. Instead of writing an integration for each provider one by one, mall uses specialized integration packages to act as intermediaries.

In R, mall uses the ellmer package to integrate with a variety of LLM providers.

To access the new feature, first create a chat connection, and then pass that connection to llm_use(). Here is an example of connecting to and using OpenAI:

    install.packages("ellmer")

    library(mall)
    library(ellmer)

    chat <- chat_openai()
    #> Using model = "gpt-4.1".

    llm_use(chat, .cache = "_my_cache")
    #>
    #> ── mall session object
    #> Backend: ellmer
    #> LLM session: model:gpt-4.1
    #> R session: cache_folder:_my_cache

In Python, mall uses chatlas as the integration point with the LLM. chatlas also integrates with several LLM providers.

To use it, first instantiate a chatlas chat connection class, and then pass that to the Polars data frame via the data frame's llm.use() function:

    pip install chatlas

    import mall
    from chatlas import ChatOpenAI

    chat = ChatOpenAI()

    data = mall.MallData
    reviews = data.reviews

    reviews.llm.use(chat)
    #> {'backend': 'chatlas', 'chat': ...

Connecting mall to external LLM providers introduces a consideration of cost. Most providers charge for the use of their API, so processing a large table with long texts could be an expensive operation.
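Before pointing mall at a paid provider, it can help to get a rough idea of how much text is about to be sent. The following is a minimal sketch and is not part of mall: the helper name `estimate_cost`, the four-characters-per-token rule of thumb, and the price per million tokens are all assumptions, and should be replaced with your provider's actual tokenizer and pricing.

```python
def estimate_cost(texts, instruction, usd_per_million_tokens=1.0, chars_per_token=4):
    """Rough input-cost estimate for sending every text with the same instruction.

    Hypothetical helper, not part of mall. Output tokens are ignored, and the
    default price and characters-per-token ratio are placeholders.
    """
    # Every row is assumed to carry the instruction plus its own text
    total_chars = sum(len(instruction) + len(t) for t in texts)
    est_tokens = total_chars / chars_per_token
    return {
        "rows": len(texts),
        "est_input_tokens": round(est_tokens),
        "est_input_usd": est_tokens / 1_000_000 * usd_per_million_tokens,
    }


# Made-up sample texts standing in for a text column
reviews = [
    "This has been the best TV I've ever used. Great screen, and sound.",
    "I regret buying this laptop. It is too slow and the keyboard is too noisy.",
]

estimate = estimate_cost(reviews, "Classify the sentiment of the following text.")
print(estimate)
```

Running this over the text column of the real table, converted to a list, gives an order-of-magnitude figure before any request is made.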

Parallel requests (R only)

A new feature introduced in ellmer 0.3.0 makes it possible to submit multiple prompts in parallel, rather than in sequence. This makes it faster, and potentially cheaper, to process a table. If the provider supports this feature, ellmer is able to leverage it via the parallel_chat() function. Gemini and OpenAI support the feature.
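The difference between submitting prompts in sequence and in parallel can be illustrated outside of R. The sketch below is plain Python and does not use ellmer or mall: `fake_llm_call` is a hypothetical stand-in that only sleeps to simulate network latency, and the thread pool is one possible way to keep several requests in flight, not a description of how parallel_chat() is implemented.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_llm_call(prompt):
    """Stand-in for a network request to an LLM provider (hypothetical)."""
    time.sleep(0.05)  # simulate network latency
    return f"response to: {prompt}"


prompts = [f"review {i}" for i in range(8)]

# Sequential: each request waits for the previous one to finish
start = time.perf_counter()
sequential = [fake_llm_call(p) for p in prompts]
sequential_secs = time.perf_counter() - start

# Parallel: several requests are in flight at once; map() keeps input order
start = time.perf_counter()
with ThreadPoolExecutor(max_workers=8) as pool:
    parallel = list(pool.map(fake_llm_call, prompts))
parallel_secs = time.perf_counter() - start

print(f"sequential: {sequential_secs:.2f}s, parallel: {parallel_secs:.2f}s")
```

Both approaches return the same responses in the same order; only the elapsed time differs, because the waiting periods overlap in the parallel version.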

In the new release of mall, the integration with ellmer has been specially written to take advantage of parallel chat. The internals have been rewritten to submit the NLP-specific instructions as a system message in order to reduce the size of each prompt. Additionally, the cache system has been re-tooled to support batched requests.
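To make the two ideas concrete, here is a minimal sketch of sending the instructions once as a system message and of a cache that works with batches. It is written in Python for illustration only and is not mall's actual internals: `cache_key`, `run_batch`, and `fake_submit` are hypothetical names, and the cache is an in-memory dictionary rather than a folder on disk.

```python
import hashlib
import json


def cache_key(system_msg, text):
    """Hash the instruction and the text together to identify a request."""
    payload = json.dumps([system_msg, text]).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def run_batch(system_msg, texts, cache, submit_batch):
    """Return one result per text, submitting only texts that are not cached."""
    keys = [cache_key(system_msg, t) for t in texts]
    # Collect the distinct texts whose results are not in the cache yet
    pending = {}
    for key, text in zip(keys, texts):
        if key not in cache:
            pending.setdefault(key, text)
    if pending:
        # The instructions travel once, as the system message;
        # each prompt in the batch is only the text itself
        results = submit_batch(system_msg, list(pending.values()))
        cache.update(zip(pending.keys(), results))
    return [cache[k] for k in keys]


# Stand-in for a provider call; records how many texts were actually sent
sent = []


def fake_submit(system_msg, batch):
    sent.append(len(batch))
    return ["positive" if "love" in t else "negative" for t in batch]


cache = {}
system_msg = "Return only one word: positive or negative."
first = run_batch(system_msg, ["I love it", "I hate it"], cache, fake_submit)
second = run_batch(system_msg, ["I love it", "It broke"], cache, fake_submit)
print(first, second, sent)
```

In the second call only the text that has not been seen before is submitted, which is the kind of saving a batch-aware cache is meant to provide.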

NLP operations without a table

Since its initial version, mall has provided the ability for R users to perform the NLP operations over a string vector, in other words, without needing a table. Starting with the new release, mall also provides this same functionality in its Python version.

mall can process vectors contained in a list object. To use it, initialize a new LLMVec class object with either an Ollama model or a chatlas Chat object, and then access the same NLP functions as the Polars extension.

    # Initialize a Chat object
    from chatlas import ChatOllama
    chat = ChatOllama(model="llama3.2")

    # Pass it to a new LLMVec
    from mall import LLMVec
    llm = LLMVec(chat)

Access the functions via the new LLMVec object, and pass the text to be processed.

    llm.sentiment(["I am happy", "I am sad"])
    #> ['positive', 'negative']

    llm.translate(["Este es el mejor dia!"], "english")
    #> ['This is the best day!']

For more information, visit the reference page: LLMVec

New cheatsheet

The brand new official cheatsheet is now available from Posit: Natural Language processing using LLMs in R/Python. Its main feature is that one side of the page is dedicated to the R version, and the other side of the page to the Python version.

A web page version is also available on the official cheatsheet site. It takes advantage of the tab feature that lets you select between R and Python explanations and examples.