In this article, you’ll learn how to build a beginner-friendly 2026 learning plan for large language models (LLMs), from core concepts to scaling, re-architecting, and practical applications.

Topics we’ll cover include:

- Foundational, conceptual, and hands-on resources for understanding LLMs.

- Guides for scaling and re-architecting LLMs to meet practical constraints.

- Selected research and domain-focused readings to broaden your perspective.

Let’s not waste any more time.

A Beginner’s Reading List for Large Language Models for 2026

Image by Editor

Introduction

The large language model (LLM) hype wave shows no sign of fading anytime soon: after all, LLMs keep reinventing themselves at a rapid pace and transforming the industry as a whole. It’s no surprise that more and more people unfamiliar with LLMs’ inner workings want to start learning about them, and what could be better for setting off on a learning journey than a reading list?

This article brings together a list of relevant readings to put on your radar if you are starting out in the world of LLMs. With a special focus on the basics and foundations, the list is supplemented by additional readings to help you go the extra mile in important areas like scaling and re-architecting LLMs. There’s also room for research studies and application-oriented, domain-specific readings where LLMs are the protagonists.

The 2026 LLM Reading List You Were Waiting For

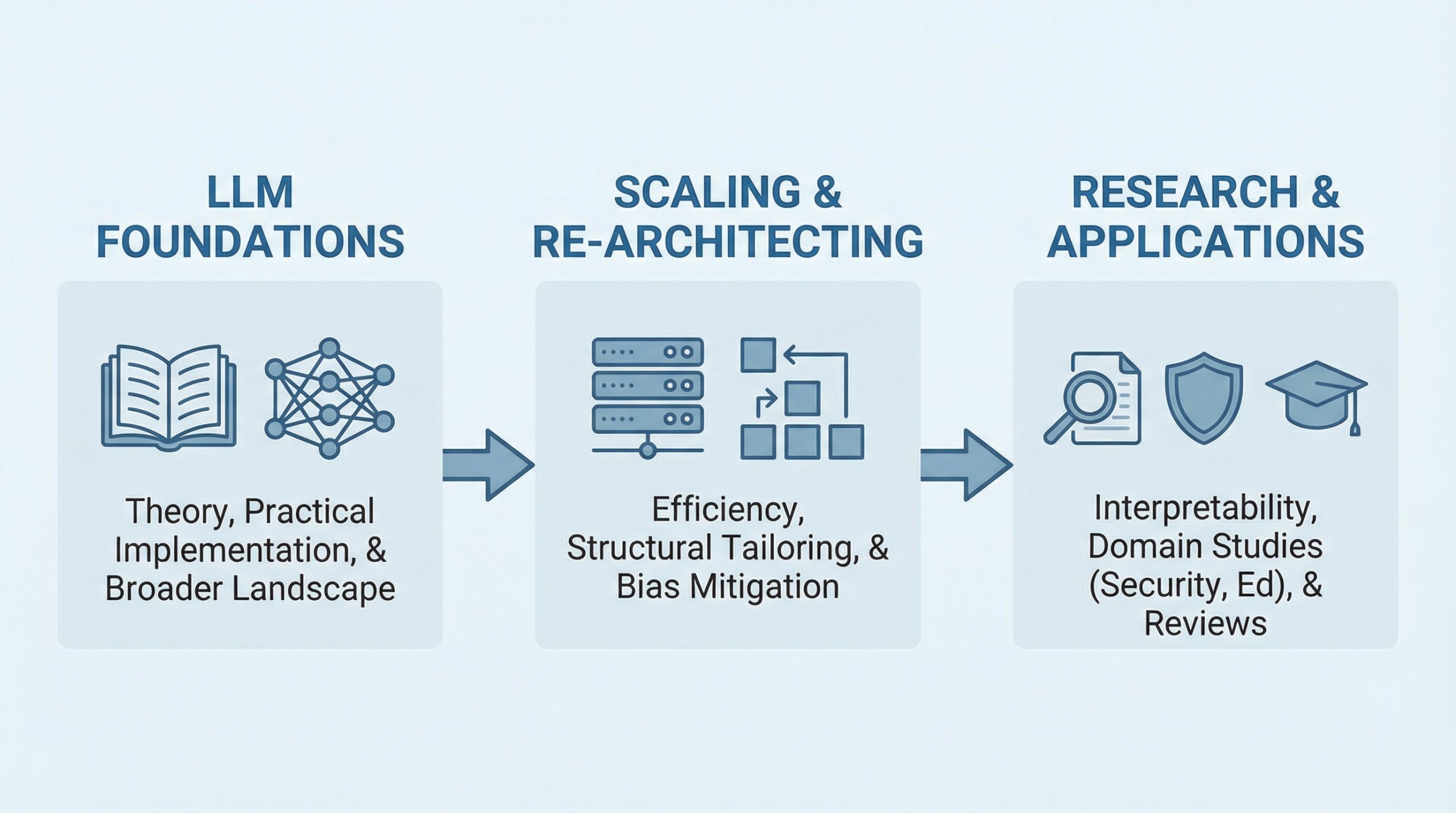

Below is the reading list we curated for you, divided into three blocks.

For beginners in LLMs, the natural place to start is the first block, which covers the foundations of LLMs. Once you are familiar with these, depending on your preferences and needs, proceed to either of the other two blocks to deepen your knowledge.

- Conceptual and Practical LLM Foundations

- Scaling and Re-architecting LLMs

- Salient Research Studies and Application-Oriented Texts

Conceptual and Practical LLM Foundations

Don’t miss these three comprehensive and open resources to acquire a really solid foundation in LLMs. They complement each other well, as you’ll find!

One of the latest and most accessible resources for learning the foundations of LLMs end to end is Tong Xiao and Jingbo Zhu’s eBook “Foundations of Large Language Models”, currently available on arXiv. The book is divided into five pillars associated with core concepts: pre-training, generative models, prompting, alignment, and inference. Recommended for: deep theoretical understanding.

Pere Martra’s repository, and specifically his “Large Language Model Notebooks” course, is an excellent, very comprehensive, and beginner-friendly resource for gaining practical knowledge of LLM use and implementation. Martra is the author of the acclaimed book “Large Language Models Projects”, and his repository provides plenty of constantly updated lessons enriched with hands-on examples and Python notebooks. Recommended for: practical, hands-on learning.

Another learning resource worth watching, because it is regularly updated, is the freely available e-book release of Dan Jurafsky and James H. Martin’s “Speech and Language Processing”. Their work adopts a broader perspective, looking not only at LLMs but also at other related and preceding types of models within the deep learning landscape. Both PDF and slideshow presentations are available for download, so you can learn using your favorite format. Recommended for: a wide look into the part of the current AI landscape surrounding LLMs.

Scaling and Re-architecting LLMs

Once you are familiar with the foundations, two LLM trends of increasing importance are worth exploring: building and maintaining models that scale, and re-architecting existing LLMs to adapt them to specific needs or to work around challenges and limitations.

For scalability in LLMs, “How to Scale Your Model”, developed by Google DeepMind scientists, is a useful learning resource covering various practical aspects like TPUs, sharded matrices, the math behind transformers, and many more.

Regarding re-architecting LLMs, a crucial topic that still has not gained sufficient attention in terms of available learning resources, it’s worth mentioning Pere Martra’s extensive educational work on LLMs again, this time focusing on the soon-to-be-available release of his new book “Rearchitecting LLMs: structural techniques for efficient models”. At the time of writing, you can read the first two chapters for free, both of them approachable and self-contained: “why does tailoring LLM architectures matter?” and “an end-to-end architectural tailoring project”. Plenty of hands-on material accompanies the theoretical notions and insights, of course.

Bias in LLMs, specifically in transformer architectures, is another topic addressed in this read from a practitioner’s perspective. It explores how internal neuron activations can help reveal subtly hidden biases, and how to act on them using techniques like pruning to obtain bias-resilient, efficient models.

Salient Research Studies and Application-Oriented Texts

Let’s wrap up with some examples of research-oriented studies and application-oriented texts about the use and development of LLMs.

- The text by Jenny Kunz provides a study on understanding LLMs from the viewpoint of interpretability, using probing classifiers and self-rationalization.

- This edited Springer book goes deep into LLMs in the field of cybersecurity, highlighting the significance of reshaping the field and its defense strategies with LLM-driven applications, and navigating the related challenges.

- In the education sector, LLMs have a lot to say, and this study offers a comprehensive analysis of LLMs in learning environments.

- Last but not least, this read provides an interdisciplinary analysis of LLMs in a variety of application fields.

Wrapping Up

This article put together a list of recent, relevant readings suitable for absolute beginners who want to familiarize themselves with LLMs in 2026. The list is further expanded with additional reads to go deeper into this growing field of artificial intelligence.

NOTE: For resources where the number of authors or editors was large, author names have not been explicitly listed in the article for the sake of readability and brevity.