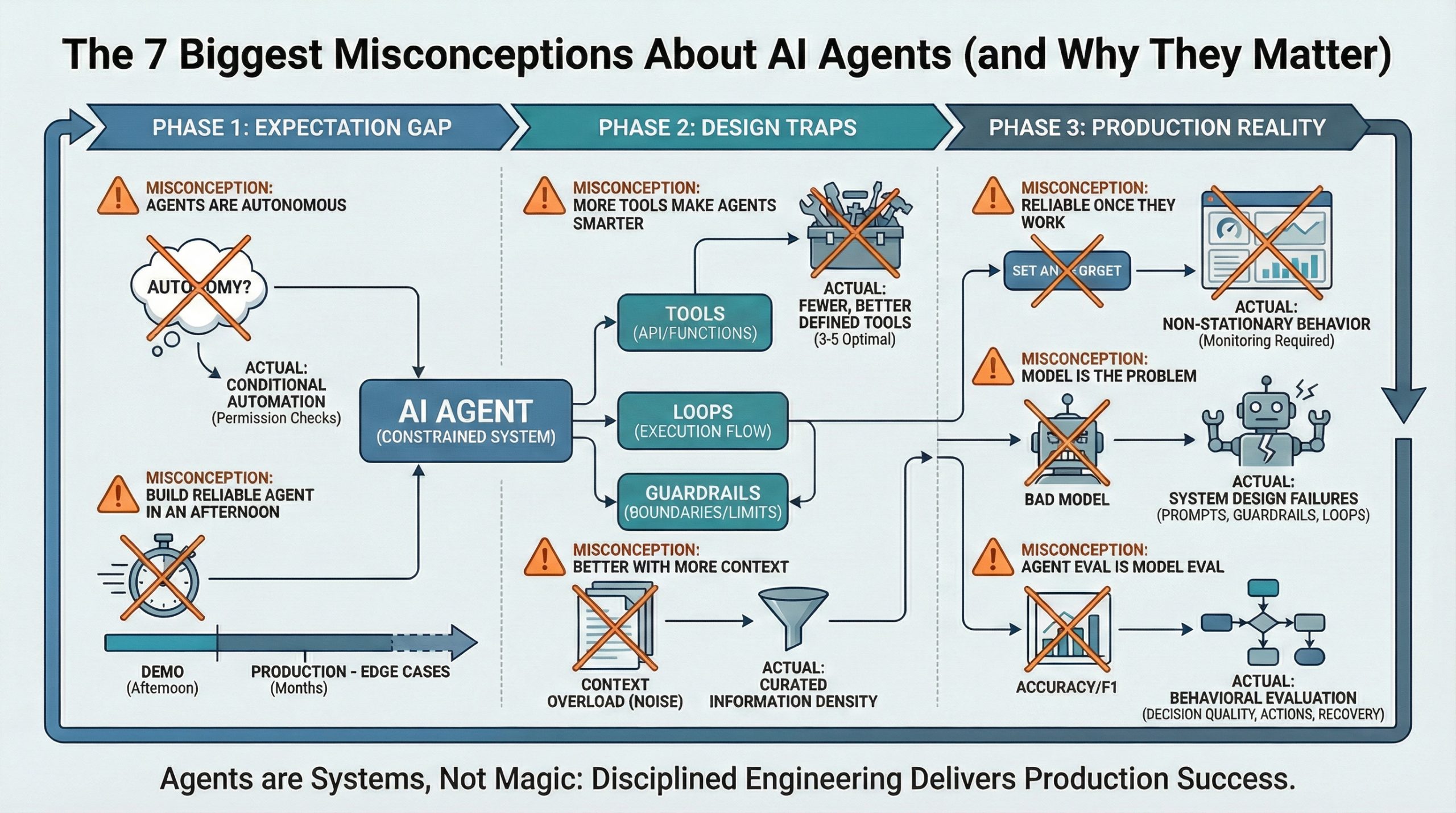

The 7 Biggest Misconceptions About AI Agents (and Why They Matter)

Image by Author

AI agents are everywhere. From customer support chatbots to code assistants, the promise is simple: systems that can act on your behalf, making decisions and taking actions without constant supervision.

But most of what people believe about agents is wrong. These misconceptions aren’t just academic. They cause production failures, blown budgets, and broken trust. The gap between demo performance and production reality is where projects fail.

Here are the seven misconceptions that matter most, grouped by where they appear in the agent lifecycle: initial expectations, design decisions, and production operations.

Part 1: The Expectation Gap

Misconception #1: “AI Agents Are Autonomous”

Reality: Agents are conditional automation, not autonomy. They don’t set their own goals. They act within boundaries you define: specific tools, carefully crafted prompts, and explicit stopping rules. What looks like “autonomy” is a loop with permission checks. The agent can take multiple steps, but only along paths you’ve pre-approved.
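To make “a loop with permission checks” concrete, here is a minimal Python sketch. It assumes a hypothetical `policy` callable standing in for the model’s decision step and a plain dict of tools; `Action`, `run_agent`, and the allowlist are illustrative names, not any framework’s API. The agent can take several steps, but every call passes an allowlist check and the loop has a hard step limit.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class Action:
    """One decision from the (hypothetical) policy."""
    tool: str = ""                            # tool the policy wants to call
    args: dict = field(default_factory=dict)  # keyword arguments for that tool
    done: bool = False                        # True when the policy decides to stop


def run_agent(
    policy: Callable[[List[str]], Action],
    tools: Dict[str, Callable[..., str]],
    allowed: Set[str],
    max_steps: int = 5,
) -> List[str]:
    """Bounded agent loop: every tool call must pass an allowlist check."""
    history: List[str] = []
    for _ in range(max_steps):  # explicit stopping rule: hard step limit
        action = policy(history)
        if action.done:  # the policy chose to stop
            break
        if action.tool not in allowed or action.tool not in tools:
            history.append(f"DENIED:{action.tool}")  # permission check failed
            continue
        history.append(tools[action.tool](**action.args))
    return history


# Usage: a scripted stand-in for the model tries a forbidden tool first.
def scripted_policy(history: List[str]) -> Action:
    plan = [
        Action("delete_db"),
        Action("search", {"q": "refund policy"}),
        Action(done=True),
    ]
    return plan[len(history)]


tools = {"search": lambda q: f"results for {q}", "delete_db": lambda: "dropped"}
print(run_agent(scripted_policy, tools, allowed={"search"}))
# ['DENIED:delete_db', 'results for refund policy']
```

A denied call is recorded in the history instead of being executed, so the policy sees the refusal on its next step; the dangerous tool exists in the registry but never runs.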

Why this matters: Overestimating autonomy leads to unsafe deployments. Teams skip guardrails because they assume the agent “knows” not to do dangerous things. It doesn’t. Autonomy requires intent. Agents have execution patterns.

Misconception #2: “You Can Build a Reliable Agent in an Afternoon”

Reality: You can prototype an agent in a day. Production takes months. The difference is edge-case handling. Demos work in controlled environments with happy-path scenarios. Production agents face malformed inputs, API timeouts, unexpected tool outputs, and context that shifts mid-execution. Each edge case needs explicit handling: retry logic, fallback paths, graceful degradation.
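As one example of that explicit handling, here is a minimal sketch of retry logic with exponential backoff and a fallback path. It assumes each tool call can be wrapped as a zero-argument Python callable that raises `TimeoutError` or `ConnectionError` on transient failures; `call_with_retry` and `flaky_lookup` are hypothetical names for illustration.

```python
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def call_with_retry(
    fn: Callable[[], T],
    fallback: Callable[[], T],
    retries: int = 3,
    base_delay: float = 0.5,
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError, ConnectionError),
) -> T:
    """Call fn, retrying transient errors with exponential backoff.

    If every attempt fails, degrade gracefully to fallback() instead of crashing.
    Errors not listed in retry_on propagate immediately.
    """
    for attempt in range(retries):
        try:
            return fn()
        except retry_on:
            if attempt < retries - 1:
                time.sleep(base_delay * 2 ** attempt)  # base_delay, 2x, 4x, ...
    return fallback()


# Usage: a hypothetical tool that times out twice, then succeeds.
calls = {"n": 0}


def flaky_lookup() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("tool API timed out")
    return "status: shipped"


print(call_with_retry(flaky_lookup, lambda: "lookup unavailable", base_delay=0.01))
# status: shipped
```

Only errors you have classified as transient are retried; anything else, such as a bug in the tool, surfaces immediately rather than being masked by the fallback.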

Why this matters: This gap breaks project timelines and budgets. Teams demo a working agent, get approval, then spend three months firefighting production issues they didn’t see coming. The hard part isn’t making it work once. It’s making it not break.

Part 2: The Design Traps

Misconception #3: “Adding More Tools Makes an Agent Smarter”

Reality: More tools make agents worse. Each new tool dilutes the probability that the agent selects the right one. Tool overload increases confusion: agents start calling the wrong tool for a task, passing malformed parameters, or skipping tools entirely because the decision space is too large. Production agents generally work best with 3-5 well-defined tools, not 20.
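One way to keep the toolset small and well-defined is to make the registry itself refuse bloat and malformed calls. This is a minimal sketch; `ToolRegistry`, `Tool`, and the `MAX_TOOLS = 5` cap are assumptions chosen to match the 3-5 tool range, not a library API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

MAX_TOOLS = 5  # assumption: a hard cap matching the 3-5 tool range


@dataclass
class Tool:
    name: str
    description: str            # one precise sentence the model sees
    required: Tuple[str, ...]   # parameter names the tool must receive
    fn: Callable[..., str]


@dataclass
class ToolRegistry:
    tools: Dict[str, Tool] = field(default_factory=dict)

    def register(self, tool: Tool) -> None:
        # Refuse to grow the decision space past the cap.
        if len(self.tools) >= MAX_TOOLS:
            raise ValueError(f"refusing to register {tool.name!r}: cap of {MAX_TOOLS} reached")
        self.tools[tool.name] = tool

    def call(self, name: str, args: dict) -> str:
        if name not in self.tools:
            raise KeyError(f"unknown tool {name!r}")
        tool = self.tools[name]
        missing = [p for p in tool.required if p not in args]
        if missing:  # reject malformed calls instead of letting the tool guess
            raise ValueError(f"{name}: missing parameters {missing}")
        return tool.fn(**args)


# Usage
registry = ToolRegistry()
registry.register(Tool("get_order", "Look up an order by its ID.", ("order_id",),
                       lambda order_id: f"order {order_id}: shipped"))
print(registry.call("get_order", {"order_id": "A1"}))  # order A1: shipped
```

Failing loudly at registration and call time turns “the agent got confused” into a concrete, loggable error.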

Why this matters: Most agent failures are tool-selection failures, not reasoning failures. When your agent hallucinates or produces nonsense, it’s often because it chose the wrong tool or mis-ordered its actions. The fix isn’t a better model. It’s fewer, better-defined tools.

Misconception #4: “Agents Get Better With More Context”

Reality: Context overload degrades performance. Stuffing the prompt with documents, conversation history, and background information doesn’t make the agent smarter. It buries the signal in noise. Retrieval accuracy drops. The agent starts pulling irrelevant information or missing critical details because it’s searching through too much content. Larger contexts also drive up cost and latency, and push you toward token limits.

Why this matters: Information density beats information volume. A well-curated 2,000-token context usually outperforms a bloated 20,000-token dump. If your agent is making bad decisions, check whether it’s drowning in context before you assume it’s a reasoning problem.
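Curation can be as simple as ranking candidate chunks and keeping only what fits a fixed budget. The sketch below assumes chunks arrive as `(relevance_score, text)` pairs from some retriever and approximates tokens by counting words; a real system would use the model’s own tokenizer. `build_context` is a hypothetical helper.

```python
from typing import List, Tuple


def build_context(chunks: List[Tuple[float, str]], budget_tokens: int = 2000) -> str:
    """Greedily keep the highest-scoring chunks that fit within a token budget.

    chunks: (relevance_score, text) pairs, e.g. from a retriever.
    Tokens are approximated as whitespace-separated words (an assumption;
    use the model's tokenizer in practice).
    """
    selected: List[str] = []
    used = 0
    for _score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        cost = len(text.split())
        if used + cost > budget_tokens:
            continue  # skip a chunk that would overflow; a smaller one may still fit
        selected.append(text)
        used += cost
    return "\n\n".join(selected)


# Usage: with a 6-word budget, the 6-word mid-relevance chunk no longer fits,
# but the short low-relevance one does.
chunks = [
    (0.9, "refund window is 30 days"),
    (0.5, "office hours are nine to five"),
    (0.1, "ok"),
]
print(build_context(chunks, budget_tokens=6))
```

Greedy selection is the simplest design choice here; it favors relevance first and only then fills leftover space.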

Part 3: The Production Reality

Misconception #5: “AI Agents Are Reliable Once They Work”

Reality: Agent behavior is non-stationary. The same inputs don’t guarantee the same outputs. APIs change, tool availability fluctuates, and even minor prompt changes can cause behavioral drift. A model update can shift how the agent interprets instructions. An agent that worked perfectly last week can degrade this week.

Why this matters: Reliability problems don’t show up in demos. They show up in production, under load, over time. You can’t “set and forget” an agent. You need monitoring, logging, and regression testing on the specific behaviors that matter, not just outputs.
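Monitoring for drift can start with something as small as a rolling success rate compared against the rate measured when the agent was last validated. This sketch assumes you can label each run as a success or failure; `DriftMonitor`, the window size, and the tolerance are illustrative assumptions.

```python
from collections import deque
from typing import Deque


class DriftMonitor:
    """Flag degradation when a rolling success rate falls below a baseline.

    baseline: success rate measured when the agent was last validated.
    tolerance: how far the rolling rate may drop before alerting (assumed value).
    """

    def __init__(self, baseline: float, window: int = 100, tolerance: float = 0.05):
        self.baseline = baseline
        self.tolerance = tolerance
        self.results: Deque[bool] = deque(maxlen=window)  # oldest runs fall off

    def record(self, success: bool) -> None:
        self.results.append(success)

    def rolling_rate(self) -> float:
        return sum(self.results) / len(self.results) if self.results else 1.0

    def degraded(self) -> bool:
        # Only judge once the window is full, to avoid alerting on a handful of runs.
        full = len(self.results) == self.results.maxlen
        return full and self.rolling_rate() < self.baseline - self.tolerance


# Usage: 7 successes out of the last 10 runs against a 0.9 baseline.
monitor = DriftMonitor(baseline=0.9, window=10, tolerance=0.05)
for ok in [True] * 7 + [False] * 3:
    monitor.record(ok)
print(monitor.rolling_rate(), monitor.degraded())  # 0.7 True
```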

Misconception #6: “If an Agent Fails, the Model Is the Problem”

Reality: Most failures are system design failures, not model failures. The usual culprits? Poor prompts that don’t specify edge cases. Missing guardrails that let the agent spiral. Weak termination criteria that allow infinite loops. Bad tool interfaces that return ambiguous outputs. Blaming the model is easy. Fixing your orchestration layer is hard.
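Weak termination criteria are among the easiest of these to fix in code. Here is a minimal sketch of a loop guard, assuming each agent step is described by a tool name and a dict of arguments; `LoopGuard` and its default limits are hypothetical.

```python
from collections import deque
from typing import Deque, Optional


class LoopGuard:
    """Stop an agent that repeats the same action or exceeds a step budget.

    The default limits are illustrative, not recommendations.
    """

    def __init__(self, max_steps: int = 20, max_repeats: int = 3):
        self.max_steps = max_steps
        self.max_repeats = max_repeats
        self.steps = 0
        self.recent: Deque[str] = deque(maxlen=max_repeats)

    def check(self, tool: str, args: dict) -> Optional[str]:
        """Return a reason to stop, or None if this step may proceed."""
        self.steps += 1
        if self.steps > self.max_steps:
            return f"step budget of {self.max_steps} exceeded"
        # Canonical string for (tool, args) so identical calls compare equal.
        self.recent.append(f"{tool}:{sorted(args.items())!r}")
        if len(self.recent) == self.max_repeats and len(set(self.recent)) == 1:
            return f"{tool} called {self.max_repeats} times in a row with identical arguments"
        return None


# Usage: the third identical search trips the guard.
guard = LoopGuard(max_steps=10, max_repeats=3)
for _ in range(3):
    reason = guard.check("search", {"q": "refund policy"})
print(reason)  # search called 3 times in a row with identical arguments
```

Returning a reason string rather than raising lets the orchestration layer decide whether to stop, escalate to a human, or feed the reason back to the model.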

Why this matters: When teams default to “the model isn’t good enough,” they waste time waiting for the next model release instead of fixing the actual failure point. Many agent problems can be solved with better prompts, clearer tool contracts, and tighter execution boundaries.
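A clearer tool contract can be as simple as never letting a tool hand back a bare, ambiguous value. This sketch wraps any Python callable so that exceptions and empty results become explicit failures; `ToolResult` and `as_contract` are hypothetical names, not part of any agent framework.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass(frozen=True)
class ToolResult:
    """Every tool call reports success or failure explicitly."""
    ok: bool
    data: Any = None
    error: Optional[str] = None


def as_contract(fn: Callable[..., Any]) -> Callable[..., ToolResult]:
    """Wrap a raw tool so exceptions and empty results become explicit failures."""
    def wrapped(**kwargs: Any) -> ToolResult:
        try:
            data = fn(**kwargs)
        except Exception as exc:  # report the failure instead of returning ambiguous text
            return ToolResult(ok=False, error=f"{type(exc).__name__}: {exc}")
        # Only None and "" count as empty; 0 or [] are treated as valid data.
        if data is None or data == "":
            return ToolResult(ok=False, error="tool returned no data")
        return ToolResult(ok=True, data=data)
    return wrapped


# Usage: the model now sees an unambiguous failure, not a blank value.
get_stock = as_contract(lambda sku: {"A1": 4}.get(sku))
print(get_stock(sku="A1"))  # ToolResult(ok=True, data=4, error=None)
print(get_stock(sku="ZZ"))  # ToolResult(ok=False, data=None, error='tool returned no data')
```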

Misconception #7: “Agent Evaluation Is Just Model Evaluation”

Reality: Agents need to be evaluated on behavior, not outputs. Classic machine learning metrics like accuracy or F1 score don’t capture what matters. Did the agent choose the right action? Did it stop when it should have? Did it recover gracefully from errors? You need to measure decision quality, not text quality. That means tracking tool-selection accuracy, loop termination rates, and failure recovery paths.
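Behavioral metrics can be computed directly from recorded runs. This sketch assumes each run is logged as a hypothetical `Trace` holding the expected and actual tool sequences, whether the run stopped on its own, and whether it recovered from errors. Tool-selection accuracy here is a simple position-by-position match, which is one possible definition among several.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Trace:
    """One recorded agent run (a hypothetical logging format)."""
    expected_tools: List[str]  # tool sequence a reviewer marked as correct
    actual_tools: List[str]    # tools the agent actually called, in order
    terminated: bool           # stopped on its own rather than hitting a hard limit
    errors: int = 0            # tool errors encountered during the run
    recovered: bool = True     # completed the task despite those errors


def behavioral_metrics(traces: List[Trace]) -> Dict[str, float]:
    if not traces:
        raise ValueError("need at least one trace")
    # Tool-selection accuracy: position-by-position agreement with the expected
    # sequence, penalizing extra or missing calls via the longer length.
    matches = total = 0
    for t in traces:
        total += max(len(t.expected_tools), len(t.actual_tools))
        matches += sum(e == a for e, a in zip(t.expected_tools, t.actual_tools))
    with_errors = [t for t in traces if t.errors > 0]
    return {
        "tool_selection_accuracy": matches / total if total else 1.0,
        "termination_rate": sum(t.terminated for t in traces) / len(traces),
        # Recovery is only meaningful for runs that actually hit an error.
        "recovery_rate": (
            sum(t.recovered for t in with_errors) / len(with_errors) if with_errors else 1.0
        ),
    }


# Usage
traces = [
    Trace(["search", "answer"], ["search", "answer"], terminated=True),
    Trace(["search", "answer"], ["calculator", "answer", "answer"],
          terminated=False, errors=1, recovered=False),
]
print(behavioral_metrics(traces))
# {'tool_selection_accuracy': 0.6, 'termination_rate': 0.5, 'recovery_rate': 0.0}
```

Note that both runs could end with a fluent final answer; only the action-level metrics expose that the second one picked the wrong tool, looped, and never recovered.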

Why this matters: A high-quality language model can still produce terrible agent behavior. If your evaluation doesn’t measure actions, you’ll miss critical failure modes: agents that call the wrong APIs, waste tokens on irrelevant loops, or fail without raising errors.

Agents Are Systems, Not Magic

The most successful agent deployments treat agents as systems, not intelligence. They succeed because they impose constraints, not because they trust the model to “figure it out.” Autonomy is a design choice. Reliability is a monitoring practice. Failure is a system property, not a model flaw.

If you’re building agents, start with skepticism. Assume they’ll fail in ways you haven’t imagined. Design for containment first, capability second. The hype promises autonomous intelligence. The reality requires disciplined engineering.